Retrieval Augmented Generation (RAG) On Google Cloud

A comprehensive breakdown of available services and recommended architectures by use case

This article was written by me and cross-posted on the Zencore Engineering blog. Zencore is a cloud consulting and engineering firm led by a team of former Google engineers and executives.

Overview

Google Cloud offers many different options to build Retrieval Augmented Generation (RAG) powered applications. These range from the discrete components that make up a RAG solution (embeddings, vector search, LLMs) to services that combine multiple steps, or even the entire RAG application, in a single service. The best option for you will depend on factors such as your use case, engineering expertise, existing tech stack and future needs.

We will start with a set of use cases and design a solution architecture using the most appropriate options. After that we will go through a detailed breakdown of the full list of services with the pros, cons and recommendations for when to use each.

Disclaimer: These services are all under active development so expect that these details may change over time.

Use Cases

Let’s consider a few different use cases and what an appropriate architecture might look like for each. This is not a comprehensive list of use cases but rather illustrative examples.

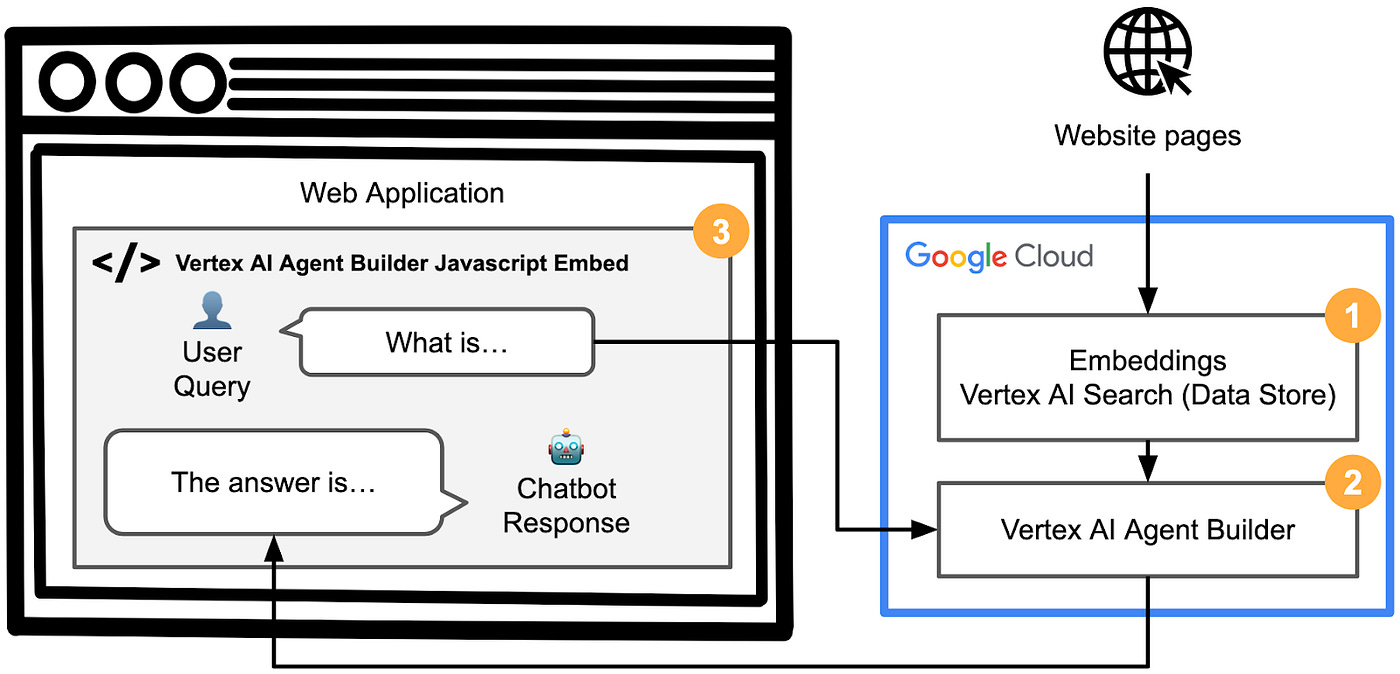

Use Case #1 | No-Code Educational Chatbot

Description: We would like to have a chatbot added on our website homepage to answer questions based on a series of educational articles hosted on our website. We do not have a large front end or backend engineering team.

Recommendation: Vertex AI Agent Builder offers a no-code solution to ingest web pages into a data store, build a chatbot connected to that data store and host that chatbot on your website.

Architecture:

Steps:

Create a Vertex AI Search data store to crawl and embed the web pages automatically

Create a Vertex AI Agent Builder app and connect to the data store

Use the Agent website JavaScript embed feature to add the chatbot to an existing web page

Use Case #2 | Enhance a Custom Built Chatbot

Description: We have an existing web-based, custom-built chatbot powered by Gemini 1.0 Pro. We would like to add the ability for users to select from a list of data corpora and then ask questions about the documents in the selected corpus. What’s the easiest way to enable this?

Recommendation: Vertex AI Grounding is the easiest way to add document retrieval to an existing Gemini based chatbot.

Architecture:

Steps:

Upload each collection of documents into separate folders or buckets in Cloud Storage

Ingest each set of documents as a separate Vertex AI Search data store

Add your list of data stores as a selectable dropdown menu to your chatbot UI

When the user makes a chatbot request with an associated data store, set grounding on that data store and process the request
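The steps above can be sketched roughly as follows. This is a minimal illustration rather than the official client code: the dropdown labels, data store IDs and project name are placeholders, and the `retrieval` / `vertexAiSearch` / `datastore` field names reflect my understanding of the Gemini request body, so verify them against the current API reference before relying on them.

```python
import json

# Hypothetical mapping from the dropdown label shown in the chatbot UI
# to a Vertex AI Search data store ID (all names are placeholders).
DATA_STORES = {
    "HR policies": "hr-policies-store",
    "Product manuals": "product-manuals-store",
}


def data_store_path(project: str, location: str, data_store_id: str) -> str:
    """Build the full resource name of a Vertex AI Search data store."""
    return (
        f"projects/{project}/locations/{location}"
        f"/collections/default_collection/dataStores/{data_store_id}"
    )


def build_grounded_request(question: str, corpus_label: str,
                           project: str, location: str = "global") -> dict:
    """Assemble a request body that grounds the answer on the selected corpus."""
    if corpus_label not in DATA_STORES:
        raise ValueError(f"Unknown corpus: {corpus_label!r}")
    datastore = data_store_path(project, location, DATA_STORES[corpus_label])
    return {
        "contents": [{"role": "user", "parts": [{"text": question}]}],
        # Assumed field names for the grounding tool; check the API reference.
        "tools": [{"retrieval": {"vertexAiSearch": {"datastore": datastore}}}],
    }


request_body = build_grounded_request(
    "How many vacation days do new employees get?", "HR policies", "my-project"
)
print(json.dumps(request_body, indent=2))
```

Keeping the label-to-data-store mapping on the server side means the UI only ever sends a label, and an unknown label is rejected before any model call is made.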

Use Case #3 | Literature Study Assistant Chatbot

Description: Our literature website hosts free classical literature that is in the public domain (e.g. the complete works of William Shakespeare). We want to build a study assistant where users can ask detailed questions about the characters, events and interpretation of individual books. An example of a question could be “What was different about character A in chapter 1 compared to chapter 10?”

Recommendation: Use the extended 1M+ token context window of Gemini 1.5 Pro to pass entire books in the LLM’s context window, which should generate better results than a typical embedding-based retrieval approach.

Architecture:

Steps:

Upload each book into Cloud Storage

Add your list of books as a selectable dropdown menu to your chatbot UI

When the user makes a chatbot request with an associated book, pass the entire book as context to help answer each question
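Before sending a whole book to the model, it helps to check that it fits in the context window. The sketch below is one way to do that under stated assumptions: the four-characters-per-token ratio is a rough heuristic rather than the model's tokenizer, and the function names and the reserved answer budget are made up for illustration.

```python
CONTEXT_WINDOW_TOKENS = 1_000_000  # context window size cited in this article
CHARS_PER_TOKEN = 4                # rough heuristic, not an exact tokenizer


def estimate_tokens(text: str) -> int:
    """Approximate the token count from the character count (rounds up)."""
    return -(-len(text) // CHARS_PER_TOKEN)


def build_book_prompt(book_text: str, question: str,
                      reserved_for_answer: int = 8_000) -> str:
    """Return a prompt containing the whole book, or raise if it cannot fit."""
    instructions = (
        "You are a literature study assistant. "
        "Answer using only the book below.\n\n"
    )
    prompt = f"{instructions}BOOK:\n{book_text}\n\nQUESTION: {question}"
    budget = CONTEXT_WINDOW_TOKENS - reserved_for_answer
    needed = estimate_tokens(prompt)
    if needed > budget:
        raise ValueError(
            f"Book needs ~{needed} tokens but only {budget} are available"
        )
    return prompt


# Placeholder text standing in for a book loaded from Cloud Storage.
book = "Chapter 1. It was the best of times. " * 1_000
prompt = build_book_prompt(
    book, "What was different about character A in chapter 1 compared to chapter 10?"
)
print(f"Estimated prompt size: ~{estimate_tokens(prompt)} tokens")
```

For production use, replace the heuristic with the model's own token counting so the check matches what is actually billed and enforced.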

Use Case #4 | AI Art Website Advanced Image Search

Description: Our AI art website has nearly ten million images and receives over one million unique visitors per day. We have a feature to filter images by image attributes such as category, size, tags and more. The images are stored on Cloud Storage and the attributes in a PostgreSQL database. We would like to add a feature to allow users to upload an image to find similar images and to filter by image attributes.

Recommendation: Use Vertex AI Multimodal Embeddings to embed your images, store those embeddings with pgvector in AlloyDB (10x vector search performance over standard PostgreSQL) and query AlloyDB for both image similarity and image filters.

Architecture:

Steps:

Migrate your PostgreSQL table that stores your image attributes to AlloyDB, a PostgreSQL compatible database

Add a vector column (pgvector’s vector type) to your AlloyDB table

Use the Vertex AI Multimodal Embeddings API to embed all of your images and store in the pgvector column in AlloyDB

When a website image similarity search request is made, embed the image, perform a vector similarity search and filter by image attributes, all as a single query within AlloyDB. Then return the top similar images.
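The final step, similarity search plus attribute filters in a single query, could look something like the sketch below. The table name `images`, the columns `id`, `gcs_uri`, `category`, `size` and `tag`, and the helper names are all hypothetical. The `<=>` operator is pgvector's cosine distance operator, and the `%(name)s` placeholders follow the psycopg named-parameter style.

```python
def to_vector_literal(values) -> str:
    """Format a list of floats as a pgvector text literal, e.g. '[0.1,0.2]'."""
    return "[" + ",".join(repr(float(v)) for v in values) + "]"


def build_similar_images_query(query_embedding, filters: dict, top_k: int = 10):
    """Return (sql, params) for a filtered nearest-neighbor search."""
    allowed_columns = {"category", "size", "tag"}  # hypothetical attribute columns
    where_clauses = []
    params = {
        "query_embedding": to_vector_literal(query_embedding),
        "top_k": top_k,
    }
    for column, value in sorted(filters.items()):
        # Column names cannot be bound as parameters, so whitelist them.
        if column not in allowed_columns:
            raise ValueError(f"Unsupported filter column: {column}")
        where_clauses.append(f"{column} = %({column})s")
        params[column] = value
    where_sql = ("WHERE " + " AND ".join(where_clauses)) if where_clauses else ""
    sql = f"""
        SELECT id, gcs_uri,
               embedding <=> %(query_embedding)s::vector AS cosine_distance
        FROM images
        {where_sql}
        ORDER BY embedding <=> %(query_embedding)s::vector
        LIMIT %(top_k)s
    """
    return sql, params


sql, params = build_similar_images_query(
    [0.1, 0.2, 0.3], {"category": "landscape"}, top_k=5
)
print(sql)
print(params)
```

The resulting pair can be passed to a PostgreSQL driver's `execute(sql, params)` call. The embedding of the uploaded image would come from the Multimodal Embeddings API, which is left out here.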

Service Details

Embeddings

Vertex AI Text Embeddings

About:

Compatible with text input only

Supervised parameter efficient tuning is available

Input: Priced per input characters, with a discount for batch requests

Output: No charge

Quota: 1,500 requests/minute

Pros:

Simple, easy to use API

Cons:

2,000 token input limit is relatively small

Generates a single 768-dimensional vector per embedding with no custom sizing. This may be limiting.

Open source alternatives are abundant and generally inexpensive to host

Recommended for:

If you do not want to manage open source models and have limited customization needs
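Because of the input limit, longer documents have to be split before they are embedded. Here is a minimal chunking sketch. The 1.3 tokens-per-word ratio and the overlap size are assumptions for illustration, not properties of the API, and the real tokenizer may count differently.

```python
def chunk_words(text: str, max_tokens: int = 2000,
                tokens_per_word: float = 1.3,
                overlap_words: int = 20) -> list[str]:
    """Split text into overlapping word chunks that stay under a token budget."""
    words = text.split()
    words_per_chunk = max(1, int(max_tokens / tokens_per_word))
    if overlap_words >= words_per_chunk:
        raise ValueError("overlap_words must be smaller than the chunk size")
    step = words_per_chunk - overlap_words
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + words_per_chunk]))
        if start + words_per_chunk >= len(words):
            break  # the last chunk already reaches the end of the text
    return chunks


# Placeholder document of 5,000 words.
document = " ".join(f"word{i}" for i in range(5_000))
chunks = chunk_words(document)
print(f"{len(chunks)} chunks, first chunk has {len(chunks[0].split())} words")
```

The overlap keeps sentences that straddle a chunk boundary retrievable from either side, at the cost of embedding a few words twice.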

Vertex AI Multimodal Embeddings

About:

Compatible with text, image and video input

Input: Priced per input characters, image and per video second (with varying fps options)

Output: No charge

Quota: 120 requests/minute

Pros:

Native video embedding is very convenient and the varying video embed options can help to control costs

Generates default 1408-dimensional vector per embedding. You can also specify lower-dimension embeddings (128, 256, or 512 float vectors) for text and image data.

Cons:

No tuning options

32 token text input limit is very restrictive

Recommended for:

Image (and especially video) use cases where similarity search quality is important
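The choice of embedding dimension has a direct effect on storage. As a back-of-the-envelope sketch, assume each dimension is stored as a 4-byte float and ignore index overhead (both are assumptions). The ten million vectors match the image count from Use Case #4.

```python
BYTES_PER_FLOAT32 = 4  # assumed storage format for each dimension


def embedding_storage_gb(num_vectors: int, dimensions: int) -> float:
    """Raw storage in GB (10^9 bytes) for float32 embeddings, excluding indexes."""
    return num_vectors * dimensions * BYTES_PER_FLOAT32 / 1e9


for dims in (1408, 512, 256, 128):
    size_gb = embedding_storage_gb(10_000_000, dims)
    print(f"{dims:>4} dimensions: {size_gb:.2f} GB")
```

At 1408 dimensions the raw vectors come to about 56 GB, versus about 5 GB at 128 dimensions, so it is worth testing whether the smaller sizes give acceptable search quality for your data.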

Vector Search

Vertex AI Vector Search

About:

Dedicated vector similarity search database based on Google Research’s ANN / ScaNN techniques

Size your deployment by selecting from various VM machine and cluster sizes

Hybrid Search (dense+sparse) was announced at Google Cloud Next ’24 and is being prepared for public preview

Pricing is determined by the size of your data, the number of queries per second (QPS) you want to run, and the number of nodes you use

Quotas:

Request the necessary quotas per VM type and region before deploying

Pros:

Best in class vector search for speed and scalability. Scale up to billions of entries and return results in single-digit milliseconds.

Cons:

Can be expensive at scale

Primitive API and SDK. I recommend leaning heavily on Python helper functions.

Requires boot-up time for the underlying VM cluster, which is always on

Separate from the rest of your data, and while you can do basic query filtering, it will be limited compared to a traditional database

Recommended for:

Use cases that need high performance at scale only
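To make the speed claim concrete, it helps to see what an approximate nearest neighbor (ANN) index is approximating. The sketch below is a plain exact search: it compares the query against every stored vector, so its cost grows linearly with the number of entries. It is purely illustrative and is not how Vertex AI Vector Search is implemented. The item IDs and vectors are made up.

```python
import math


def cosine_similarity(a, b) -> float:
    """Cosine similarity of two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def exact_top_k(query, vectors: dict, k: int = 3):
    """Score every stored vector against the query and return the k best."""
    scored = [
        (cosine_similarity(query, vector), item_id)
        for item_id, vector in vectors.items()
    ]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [(item_id, score) for score, item_id in scored[:k]]


catalog = {
    "a": [1.0, 0.0],
    "b": [0.0, 1.0],
    "c": [0.9, 0.1],
}
results = exact_top_k([1.0, 0.0], catalog, k=2)
print(results)
```

An ANN index avoids the full scan by searching only a promising subset of the vectors, trading a small amount of recall for much lower latency. That trade only pays off once the collection is large.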

BigQuery Vector Search

About:

Search options: ANN or exact

Use the BigQuery native command ML.GENERATE_EMBEDDING to create an embedding right within BigQuery. This utilizes the Vertex Text Embeddings API under the hood.

Use the BigQuery native statement CREATE VECTOR INDEX to create the vector index and VECTOR_SEARCH to search.

Uses standard compute pricing

There is no charge for the processing required to build and refresh your vector indexes when the total size of indexed table data in your organization is below the 20 TB limit

Tables must have between 5,000 and 1B rows

Other very specific restrictions apply

Pros:

Generate and store embeddings and perform vector search all self contained within BigQuery

Combine vector search with joins and filters on your related data in a single step

Pricing should be very competitive for small to moderate BigQuery data sizes and query frequency

Cons:

Preview state

There are various table and row size restrictions (see the limits above)

Recommended for:

If BigQuery is your primary datastore and you need to join and filter search results against related data or you only need basic similarity search functionality
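As a rough sketch of the two statements named above, the helpers below generate the SQL as strings. The dataset, table, column and index names are placeholders, and the option names (`index_type`, `distance_type`, `top_k`) reflect my understanding of the preview syntax, which may change, so check the BigQuery reference before running anything.

```python
MIN_ROWS, MAX_ROWS = 5_000, 1_000_000_000  # row limits quoted in this article


def can_build_vector_index(row_count: int) -> bool:
    """Check a table's row count against the limits quoted above."""
    return MIN_ROWS <= row_count <= MAX_ROWS


def create_index_sql(table: str, column: str, index_name: str) -> str:
    """SQL to build an ANN index on an embedding column (assumed options)."""
    return (
        f"CREATE VECTOR INDEX {index_name} ON {table}({column}) "
        "OPTIONS(index_type = 'IVF', distance_type = 'COSINE')"
    )


def vector_search_sql(table: str, column: str, query_table: str,
                      top_k: int = 5) -> str:
    """SQL to find the nearest rows in `table` for each row of `query_table`."""
    return (
        "SELECT query.id AS query_id, base.id AS match_id, distance\n"
        "FROM VECTOR_SEARCH(\n"
        f"  TABLE {table}, '{column}',\n"
        f"  TABLE {query_table},\n"
        f"  top_k => {top_k}, distance_type => 'COSINE')"
    )


# Placeholder names for illustration only.
print(create_index_sql("my_dataset.articles", "embedding", "articles_index"))
print(vector_search_sql("my_dataset.articles", "embedding", "my_dataset.queries"))
```

Because the search is an ordinary table function, its output can be joined and filtered against the rest of your BigQuery data in the same statement.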

CloudSQL for PostgreSQL (pgvector)

About:

Google Cloud’s managed PostgreSQL database

Utilize the built in open source pgvector functionality

pgvector offers various search options and supports vectors with up to 2,000 dimensions

Standard CloudSQL for PostgreSQL pricing applies

Standard CloudSQL for PostgreSQL quotas

Pros:

Combine vector search and joins and filters in a single step

Cons:

Embeddings must be generated separately

Recommended for:

If PostgreSQL is your primary datastore and you are performing batch lookups that are not performance dependent
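Setting up pgvector on an existing table takes only a few statements. The sketch below generates them. The table and column names are placeholders, the 2,000-dimension cap is the limit quoted above, and the HNSW index type assumes a pgvector version that includes it (older versions offer IVFFlat instead).

```python
MAX_DIMENSIONS = 2_000  # dimension limit quoted in this article


def pgvector_setup_statements(table: str, dimensions: int,
                              column: str = "embedding") -> list[str]:
    """Return the SQL statements that add a vector column and an ANN index."""
    if not (table.isidentifier() and column.isidentifier()):
        raise ValueError("table and column must be simple SQL identifiers")
    if not 1 <= dimensions <= MAX_DIMENSIONS:
        raise ValueError(f"dimensions must be between 1 and {MAX_DIMENSIONS}")
    return [
        # Enable the extension once per database.
        "CREATE EXTENSION IF NOT EXISTS vector",
        # Store one fixed-size embedding per row.
        f"ALTER TABLE {table} ADD COLUMN {column} vector({dimensions})",
        # Index for cosine-distance searches (assumes HNSW is available).
        f"CREATE INDEX ON {table} USING hnsw ({column} vector_cosine_ops)",
    ]


for statement in pgvector_setup_statements("documents", 768):
    print(statement + ";")
```

Since the embeddings are generated outside the database, the application is responsible for keeping the vector column in sync whenever the source content changes.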

AlloyDB for PostgreSQL (pgvector)

About:

Google Cloud’s managed PostgreSQL compatible database offering better performance

Utilize the built in open source pgvector extension

pgvector offers various search options and supports vectors with up to 2,000 dimensions

Standard AlloyDB pricing applies

Standard AlloyDB quotas

Pros:

Combine vector search and joins and filters in a single step

10x vector search performance compared to CloudSQL for PostgreSQL

Cons:

Embeddings must be generated separately

AlloyDB is about 40% more expensive than CloudSQL for PostgreSQL

Recommended for:

If PostgreSQL is your primary datastore and you are performing interactive lookups that are performance dependent, choose AlloyDB over CloudSQL for PostgreSQL

Spanner Vector Search

About:

Implement embedding and vector search right within Spanner via custom code (see examples provided in official Google Cloud documentation).

Standard Spanner, embeddings API and Spanner custom ML model pricing applies

Standard Spanner quotas apply

Pros:

Generate and store embeddings and perform vector search all self contained in Spanner

Cons:

Preview state

Implementation is done via custom code versus a formally productized solution

Recommended for:

If Spanner is your primary datastore and you need to join and filter search results against related data

Grounding, RAG & Agent Solutions

Vertex AI Agent Builder

About:

Formerly branded as Gen App Builder and then later Search & Conversation, Vertex AI Agent Builder offers end-to-end apps or per component features to build custom search indexes, recommendation engines, chatbots and agents.

App types:

Search: Get quality results out-of-the-box and easily customize the engine

Chat: Answer complex questions out-of-the-box

Recommendations: Create a content recommendation engine

Agent: Built using natural language, agents can answer questions from data, connect with business systems through tools, and more

Data stores:

About: Vertex AI Search (data stores) is an all in one managed service to ingest different types of assets, create embeddings and function as a vector database for search

Data sources: Create a data store based on files in Cloud Storage or Google Drive, by indexing your website, from BigQuery and more via the cloud console or API

Data types: Ideal for unstructured data (PDFs, HTML, TXT) but also compatible with several structured data formats (with many limitations)

Usage: Data stores can be connected to any of the Agent Builder app types, used as a source for Vertex AI Grounding or used in any custom fashion via API

You can use your own custom embeddings

Pay separately for app query, data store query and per data store index size. Use the estimateDataSize method to calculate expected index size.

Various Agent Builder quotas and limits apply such as document count and query frequency

Pros:

Full UI creation (in addition to API), so this can be helpful for teams with limited engineering resources

Provides embeddable web UI code designed for each app type

Flexibility to use one or more components discretely or combine for a full end-to-end solution

Cons:

Preview state

Each component comes with pre-selected embedding, vector search and LLM choices, and the ability to change them is sometimes limited

It can be relatively pricey

Understanding the full set of rapidly changing products and features can be confusing (see the constant rebranding)

Recommended for:

Easiest no-code way to build a RAG retriever (embeddings and vector search) and RAG based chatbot with embeddable UI
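Since Agent Builder bills app queries, data store queries and index size separately, a small calculator makes it easier to see which of the three dominates for a given workload. Every rate below is a made-up placeholder, not a real price, and the class and function names are hypothetical. Plug in the current numbers from the pricing page.

```python
from dataclasses import dataclass


@dataclass
class AgentBuilderRates:
    """Placeholder unit prices; these are NOT real Google Cloud prices."""
    per_1k_app_queries: float
    per_1k_data_store_queries: float
    per_gb_index_month: float


def monthly_cost_breakdown(rates: AgentBuilderRates, app_queries: int,
                           data_store_queries: int, index_gb: float) -> dict:
    """Return the monthly cost for each billing dimension plus the total."""
    breakdown = {
        "app_queries": app_queries / 1000 * rates.per_1k_app_queries,
        "data_store_queries": data_store_queries / 1000 * rates.per_1k_data_store_queries,
        "index_storage": index_gb * rates.per_gb_index_month,
    }
    breakdown["total"] = sum(breakdown.values())
    return breakdown


# Hypothetical rates and workload, for illustration only.
rates = AgentBuilderRates(
    per_1k_app_queries=12.0,
    per_1k_data_store_queries=4.0,
    per_gb_index_month=5.0,
)
estimate = monthly_cost_breakdown(
    rates, app_queries=50_000, data_store_queries=50_000, index_gb=10
)
for item, cost in estimate.items():
    print(f"{item}: {cost:.2f}")
```

The index size input can come from the estimateDataSize method mentioned above.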

Vertex AI Grounding

About:

Select a preexisting Vertex AI Search data source (enterprise edition) and your model (Gemini) and it performs RAG automatically

See Ground to your own data for more information

Pricing:

Gemini 1.0 Pro — pay per character input/output

Vertex AI Search (enterprise edition) — pay for data indexing and per query

Quotas:

See Search data source quotas for number of documents to index and query volume

Pros:

Can be enabled right within the Vertex AI Gemini prompting UI and easily added to Gemini API calls

Reuse data stores across Grounding, Agent Builder and Search options (some restrictions will apply)

Cons:

Preview state

Only available for Gemini 1.0 Pro today

Limits the potential for customization (e.g. hybrid search)

Recommended for:

Easiest way to add basic RAG to existing Gemini chatbots and custom codebases

LangChain on Vertex AI

About:

Based on LangChain, a very popular open source library, Google Cloud offers a custom SDK with LangChain integrations

LangChain supports general purpose LLM based applications, so it can work for RAG use cases plus many more

Pricing:

N/A

Quotas:

N/A

Pros:

Developers already familiar with LangChain can get started with Google Cloud integrated LangChain services quickly

Extensive set of helper functions and code examples

Has connectors across both Google Cloud and non-Google Cloud services, which makes it easy to swap out services as needed

Cons:

Preview state

Pure Python implementation, so the engineering team must fully implement and maintain it

For LangChain in general, there can be differences in versions and features/parameters between the LangChain library and the direct Google Cloud equivalent, with questionable error handling. This can make it harder to optimize and debug compared to using the direct APIs and services.

It is unclear what advantages most users will have using LangChain on Vertex AI versus the regular open source implementation of LangChain

Recommended for:

Teams experienced with LangChain and / or teams with existing LangChain code that will be easy to port to integrate with Google Cloud

Vertex AI RAG (LlamaIndex)

About:

Based on LlamaIndex, an open source framework purpose built to handle retrieval style applications

Add your documents, select a model (Gemini), and it performs RAG automatically

Document sources: single file, Cloud Storage and Google Drive

Pricing:

N/A

Quotas are present across data ingestion, data management and retrieval

Pros:

Developers already familiar with LlamaIndex can get started with Google Cloud integrated LlamaIndex services quickly

Extensive set of helper functions and code examples

Has connectors across both Google Cloud and non-Google Cloud services, which makes it easy to swap out services as needed

Cons:

Preview state

Pure Python implementation, so the engineering team must fully implement and maintain it

Recommended for:

Teams experienced with LlamaIndex working specifically on RAG style projects

Other Options

Gemini 1.5 Pro — Full document analysis using 1M+ token context window

About:

Gemini 1.5 Pro is Google’s latest foundation model available via API and the cloud console

New to Gemini 1.5 Pro is an extra large context window of 1 million (soon to be 2 million) tokens. This allows for very large text documents (and other input types) to be processed at runtime.

As an alternative to RAG, provide full documents as context. This should generally outperform RAG.

For multi-document use cases, a document <-> user query lookup will be required. The Document Ranking API can help solve for this.

Pricing is constantly changing, so check out the latest at the link provided.

Input: Priced per text character, per image, per video second and per audio second

Output: Priced per character

As of this writing, Gemini 1.5 Pro has a very low default quota of 5 queries/minute. This should increase over time to be closer to the current Gemini 1.0 Pro quota (300 queries/minute).

Pros:

Better recall performance than RAG — 99% accuracy. This is a huge improvement over prior generation LLMs where recall suffered as context length increased.

Removes the need for the complexity of implementing RAG (depending on use case)

Cons:

Preview state with very low default quota

Pay-per-token pricing means that many requests with large context length may get quite expensive

Response times increase linearly with context length. This could lead to worse response times compared to RAG.

Recommended for:

Cases where queries are generally about single documents that are all smaller than the model token limit and precision matters more than inference time
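For the multi-document case mentioned above, something has to decide which document to load into the context window. The sketch below uses a naive keyword overlap between the query and a short summary of each document. It is only a stand-in to show the shape of the lookup, not the Document Ranking API, and the document IDs and summaries are invented.

```python
import re


def terms(text: str) -> set:
    """Lowercase a string and split it into a set of word tokens."""
    return set(re.findall(r"[a-z0-9']+", text.lower()))


def pick_document(query: str, summaries: dict):
    """Return the ID of the summary sharing the most words with the query.

    Returns None when no summary shares any word with the query.
    """
    query_terms = terms(query)
    best_id, best_overlap = None, 0
    for doc_id, summary in summaries.items():
        overlap = len(query_terms & terms(summary))
        if overlap > best_overlap:
            best_id, best_overlap = doc_id, overlap
    return best_id


# Hypothetical catalog: one short summary per full-length document.
summaries = {
    "hamlet": "Prince Hamlet of Denmark seeks revenge against his uncle Claudius",
    "macbeth": "Scottish general Macbeth murders King Duncan after a prophecy",
}
selected = pick_document("Why does Hamlet delay his revenge?", summaries)
print(selected)
```

Once a document is selected, its full text is passed as context. When nothing matches, it is safer to ask the user to pick a document than to guess.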